Yuan Qing

yq2379 [at] columbia [dot] edu

My research interests lie in multi-modal learning and perception, with an emphasis on developing unified visual and sensory representations that enable intelligent systems to understand and interact with the physical world. I am particularly focused on advancing the training efficiency, interpretability, and systematic evaluation of large-scale multi-modal foundation models, with the goal of building generalizable and robust perception systems for real-world applications.

Bio

I am an incoming CS PhD student at Rutgers University. I was a visiting student at Boston University, working with Prof. Boqing Gong. I received my M.S. in Electrical Engineering from Columbia University, where I worked with Dr. Jingxi Xu. Previously, I obtained my B.Eng. in Electronic Information Engineering from UESTC, where I was advised by Prof. Lixin Duan.

Publications

\* Equal contribution

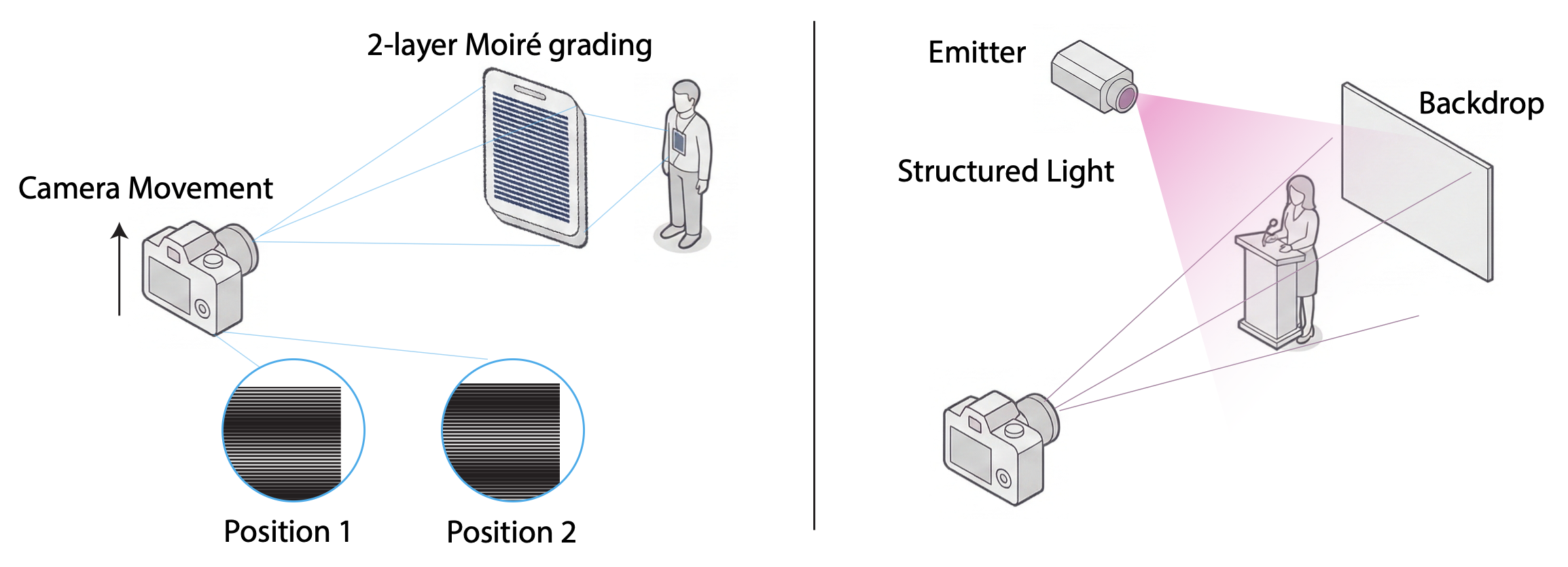

Yuan Qing*, Kunyu Zheng*, Lingxiao Li*, Boqing Gong, Chang Xiao

arXiv 2026

arXiv | Project Page

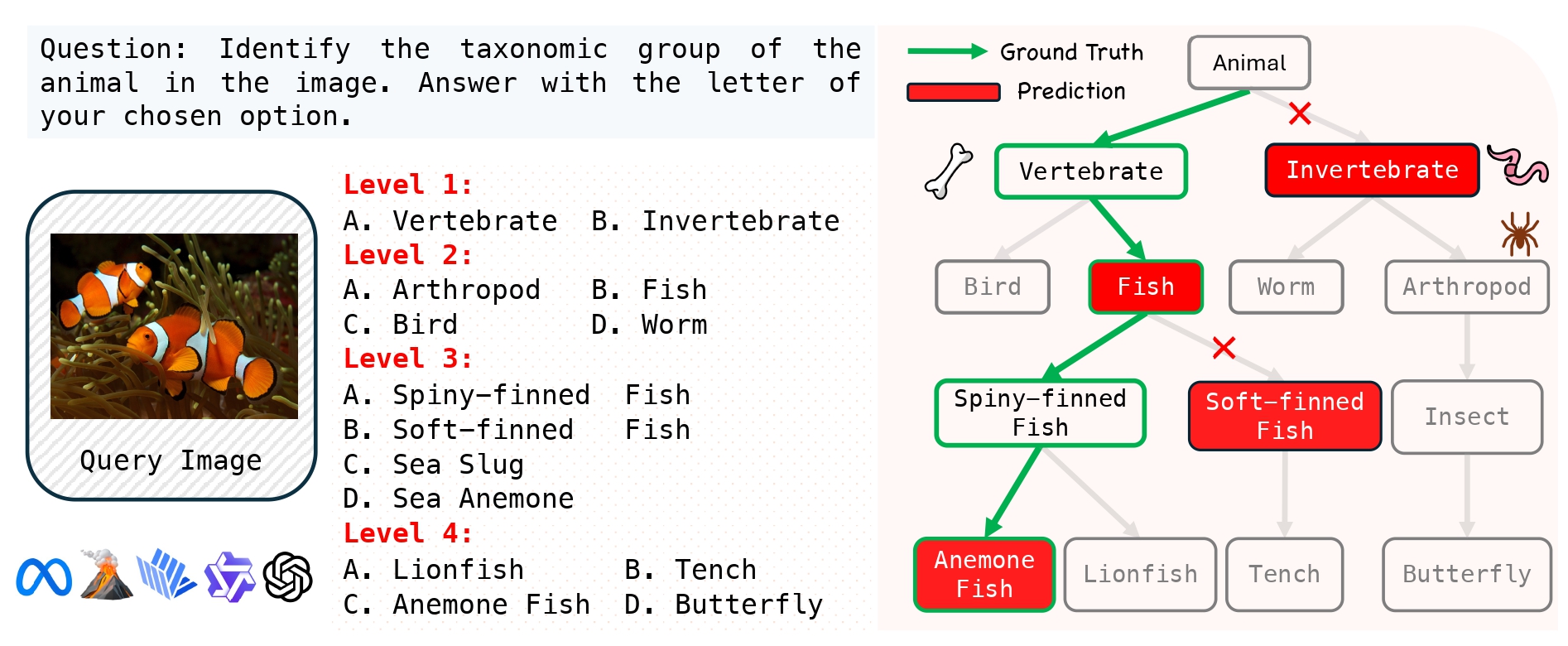

Yuwen Tan*, Yuan Qing*, Boqing Gong

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2026

arXiv | Project Page

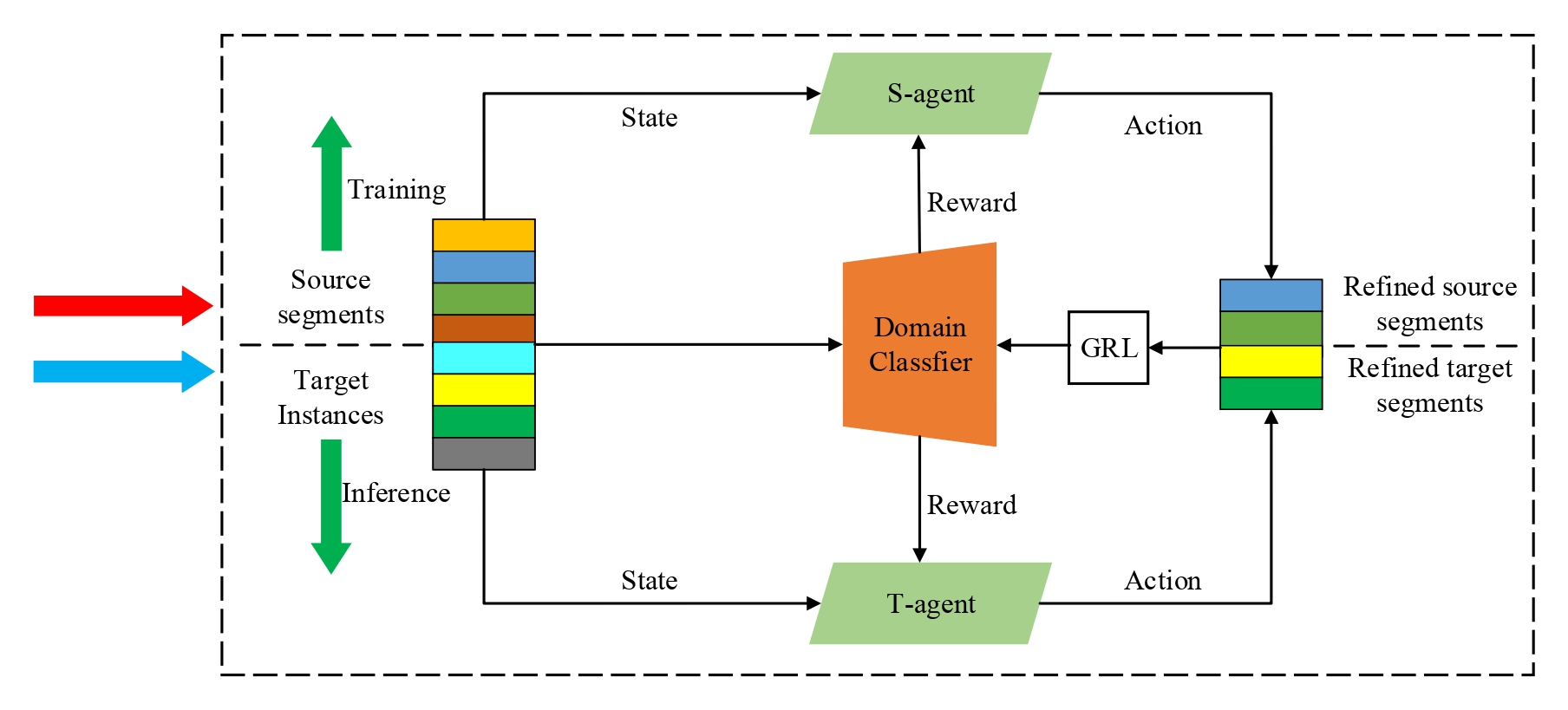

Yuan Qing, Naixing Wu, Shaohua Wan, Lixin Duan

Chinese Conference on Pattern Recognition and Computer Vision (PRCV), 2023

arXiv

Projects

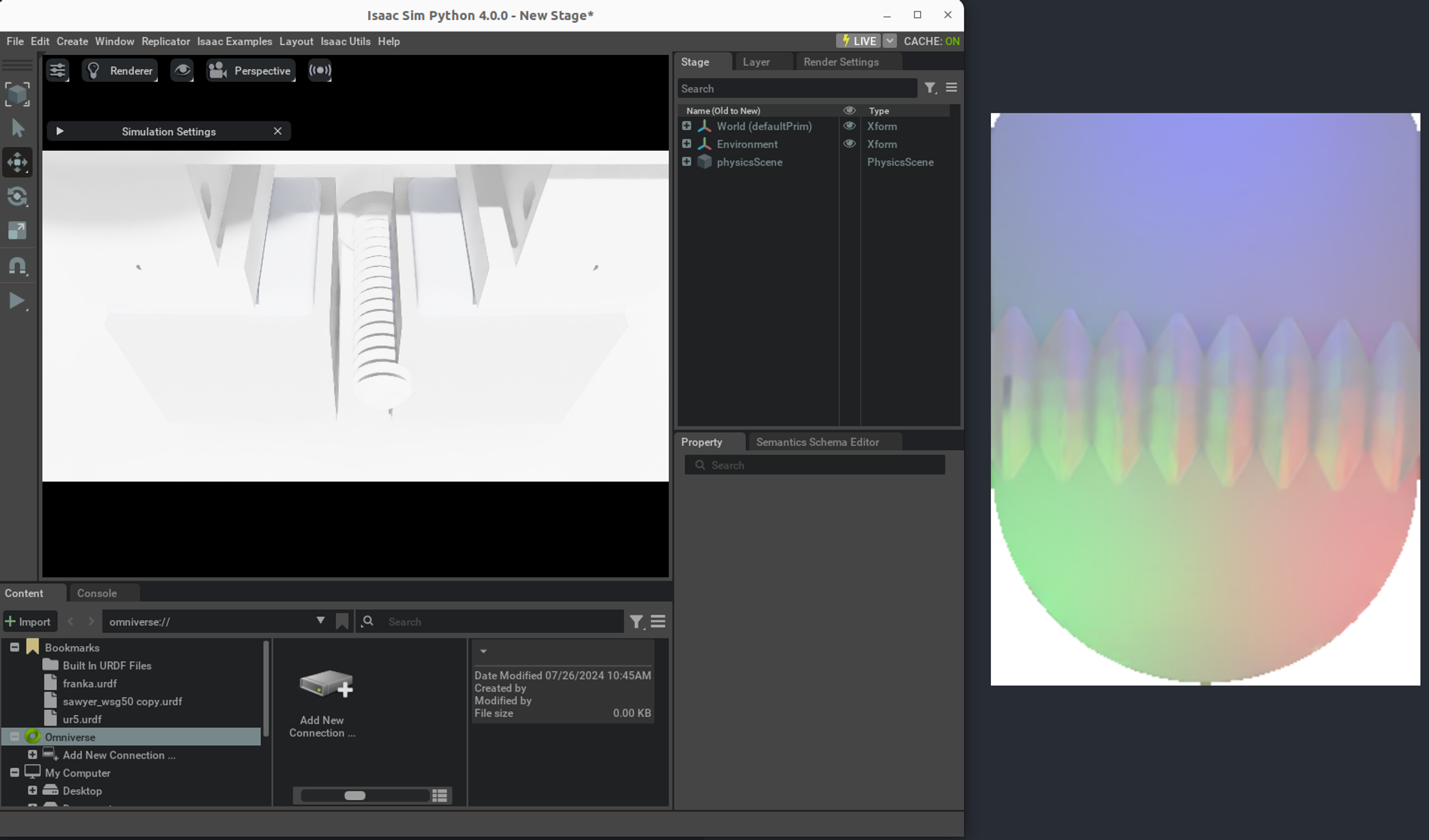

Learning Tactile Policies for Complex Manipulation Tasks in Simulation

A demo tactile simulator tailored for DIGIT fingers in IsaacLab.

Selected Honors

- Master of Science Award of Excellence at Columbia, 2025

- M.S. EE Honors Student at Columbia, 2024

- Outstanding Undergraduate Thesis of UESTC, 2023

- Outstanding Graduate in Sichuan Province, 2023

- Undergraduate National Scholarship (top 1.6%), 2020